Placements

Find out how to apply here.

-

Project institution:

Project supervisor(s): Dr Thomas Jones (Lancaster) & Archie Dunbar (Lancaster)

Overview and Background

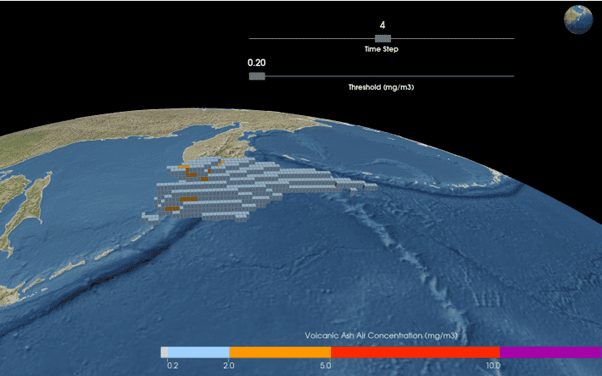

During a volcanic eruption, volcanic ash particles, consisting of fragments of volcanic rock and glass, are ejected into the atmosphere, where they can travel downwind for hundreds of kilometres, posing a significant risk to commercial aircraft and infrastructure. In the event of an eruption, the London VAAC (Volcanic Ash Advisory Centre), hosted at the UK’s Met Office, uses a numerical dispersion model called NAME (Numerical Atmospheric Dispersion Modelling Environment) to produce volcanic ash forecasts. NAME is initialized using numerical weather prediction data and a series of eruption source parameters (ESPs) that describe the rate at which volcanic ash is released into the atmosphere, the height of release (plume height) and the ash particle size distribution (PSD). Uncertainty in the ESPs is a leading cause of uncertainty in the forecasts.

It is not currently possible to directly measure the ash particle size distribution (PSD) during a real eruption. However, Costa et al. (2016) showed that the PSDs of historical eruptions could be fitted to standard bimodal distributions whose parameters correlate strongly with plume height and the average viscosity of the erupting magma. In principle, these correlations could therefore be used to estimate the PSD of an eruption in real time, based on plume height measurements and an estimate of magma viscosity.
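To make the idea of a bimodal PSD concrete, the sketch below builds one from a weighted sum of two Gaussians in phi units, where phi = -log2(diameter in mm), and converts it into mass fractions per size bin. This is only an illustration: the choice of a two-Gaussian form, the function names and every parameter value are assumptions made here, not fits or code from Costa et al. (2016) or NAME.

```python
import math


def diameter_mm_to_phi(diameter_mm):
    """Convert a particle diameter in millimetres to the phi scale."""
    return -math.log2(diameter_mm)


def _normal_cdf(x, mean, std):
    """Cumulative distribution function of a Gaussian."""
    return 0.5 * (1.0 + math.erf((x - mean) / (std * math.sqrt(2.0))))


def bimodal_cdf(phi, weight, mean_1, std_1, mean_2, std_2):
    """Mass fraction with phi <= the given value (i.e. coarser than that size).

    `weight` is the fraction of mass in the first mode; the remainder
    (1 - weight) belongs to the second mode.
    """
    return (weight * _normal_cdf(phi, mean_1, std_1)
            + (1.0 - weight) * _normal_cdf(phi, mean_2, std_2))


def bin_mass_fractions(phi_edges, weight, mean_1, std_1, mean_2, std_2):
    """Mass fraction in each bin, renormalised so the bins sum to 1."""
    cdf = [bimodal_cdf(e, weight, mean_1, std_1, mean_2, std_2)
           for e in phi_edges]
    raw = [hi - lo for lo, hi in zip(cdf, cdf[1:])]
    total = sum(raw)
    return [r / total for r in raw]


# Hypothetical parameters: a coarse mode near 1 mm and a fine mode near 31 microns.
phi_edges = list(range(-4, 11))  # bin edges from phi = -4 to phi = 10
fractions = bin_mass_fractions(phi_edges, weight=0.6,
                               mean_1=0.0, std_1=1.5,
                               mean_2=5.0, std_2=1.2)
```

In a real-time setting, the five distribution parameters would come from the fitted relationships with plume height and magma viscosity rather than being set by hand as they are here.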

Objectives

You will work within the volcanology research group at Lancaster University, in close collaboration with ExaGEO PhD student Archie Dunbar. You will incorporate new global PSD datasets to improve the fits obtained by Costa et al. (2016), then use these improved fits to calculate the PSD for a hypothetical eruption, coding a framework to enable this. The calculated PSD will then be used as input for NAME atmospheric dispersion simulations (Jones et al., 2007), with the parameters describing the calculated distribution varied to assess how sensitive the model outputs are to them.

What you’ll do

- Improve the fits obtained by Costa et al. (2016) by incorporating new PSD datasets, including data obtained by Lancaster researchers.

- Use these improved fits to calculate PSDs for a hypothetical eruption.

- Use these PSDs to perform a sensitivity study with NAME, identifying the parameters of the PSD fit that have the greatest impact on output concentrations.

This work will help quantify a key source of uncertainty in volcanic ash forecasts whose magnitude is currently unknown, and will directly contribute to the ExaGEO PhD work of Archie Dunbar.
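One simple way to organise the sensitivity study is a one-at-a-time design: perturb each PSD parameter in turn by a fixed relative amount and record the relative change in the model output. The sketch below illustrates that design only. The function `toy_concentration` is a made-up stand-in and does not represent NAME, and the parameter names and values are hypothetical.

```python
def one_at_a_time_sensitivity(model, baseline, rel_step=0.1):
    """Normalised sensitivity of the model output to each parameter.

    Each parameter is increased by `rel_step` (10% by default) while the
    others stay at their baseline values. The returned value is the
    relative change in output divided by the relative change in input.
    Assumes non-zero baseline parameter values and a non-zero baseline output.
    """
    base_out = model(**baseline)
    sensitivities = {}
    for name, value in baseline.items():
        perturbed = dict(baseline)
        perturbed[name] = value * (1.0 + rel_step)
        out = model(**perturbed)
        sensitivities[name] = ((out - base_out) / base_out) / rel_step
    return sensitivities


def toy_concentration(fine_fraction, fine_mode_phi):
    """Made-up stand-in for a dispersion model output (NOT NAME)."""
    return fine_fraction * fine_mode_phi ** 2


baseline = {"fine_fraction": 0.4, "fine_mode_phi": 4.0}
sens = one_at_a_time_sensitivity(toy_concentration, baseline)

# Parameters ordered from most to least influential.
ranked = sorted(sens, key=lambda name: abs(sens[name]), reverse=True)
```

A one-at-a-time design ignores interactions between parameters, so it is best read as a first screening step rather than a full sensitivity analysis.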

Why apply

This 10-week placement gives you the chance to work on real volcanic hazard research with direct operational relevance. You’ll gain hands‑on experience with atmospheric modelling, data analysis, and scientific coding, while contributing to work used by the UK Met Office and the wider volcanology community. It’s a rare opportunity to develop valuable skills, work with active researchers, and make a meaningful impact on real‑world forecasting challenges.

References & Further Reading

Costa, A., Pioli, L., Bonadonna, C., 2016. Assessing tephra total grain-size distribution: Insights from field data analysis. Earth Planet. Sci. Lett. 443, 90–107. https://doi.org/10.1016/j.epsl.2016.02.040

Jones, A., Thomson, D., Hort, M., Devenish, B., 2007. The U.K. Met Office’s Next-Generation Atmospheric Dispersion Model, NAME III, in: Borrego, C., Norman, A.-L. (Eds.), Air Pollution Modeling and Its Application XVII. Springer US, Boston, MA, pp. 580–589. https://doi.org/10.1007/978-0-387-68854-1_62

You can find the application form here.

-

Project institution:

Project supervisor(s): Professor Adrian Jackson (EPCC)

Overview and Background

High performance computing has a growing energy and power footprint, enabling world-changing functionality but at an ever-increasing cost. There are different types of computing hardware available (e.g. CPUs, GPUs, FPGAs and AI accelerators), which provide varied computing performance, functionality, and capabilities.

We are investigating novel approaches to implementing atmospheric simulations/models by exploring alternative algorithms that can provide the same functionality as existing mathematical solvers while using fewer computing resources.

To complement this, we’d like to explore the performance of existing algorithms on different hardware, to quantify the suitability and benefits of different types of hardware for different types of software. This project would benchmark and profile a range of hardware using some common scientific software to progress towards that goal and advance our understanding of computing sustainability.

Objectives

- Measure and quantify the energy, power, and time performance of common mathematical solvers that form the core of atmospheric models on a range of computing hardware

- Explore novel computing hardware such as the Cerebras CS-3 processors

- Develop a power/energy prediction model for this type of algorithm
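The three quantities in the first objective are linked: energy is power integrated over the run time. As a minimal sketch, assuming power has been sampled at known timestamps during a solver run, the code below integrates the samples with the trapezoidal rule and reports time, energy and mean power. How the power samples are obtained is platform specific and not shown, and the sample values are made up for illustration.

```python
def energy_joules(timestamps_s, power_w):
    """Integrate sampled power (W) over time (s) with the trapezoidal rule."""
    if len(timestamps_s) != len(power_w):
        raise ValueError("timestamps and power samples must have equal length")
    if len(timestamps_s) < 2:
        raise ValueError("need at least two samples to integrate")
    total = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        total += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return total


def summarise_run(timestamps_s, power_w):
    """Return run time, energy and mean power for one benchmark run."""
    elapsed = timestamps_s[-1] - timestamps_s[0]
    energy = energy_joules(timestamps_s, power_w)
    return {
        "time_s": elapsed,
        "energy_j": energy,
        "mean_power_w": energy / elapsed,
    }


# Made-up power trace for a single run, sampled once per second.
timestamps = [0.0, 1.0, 2.0, 3.0, 4.0]
power = [100.0, 250.0, 300.0, 300.0, 120.0]
summary = summarise_run(timestamps, power)
```

Reporting all three numbers per run matters because a device can finish sooner while drawing more power, so the fastest hardware is not necessarily the most energy efficient.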

What you’ll do

You’ll plan and manage the experimental approach, solve problems as they arise when porting to and running on new hardware, and build performance and efficiency models across hardware types for the algorithms we are exploring.

Why apply

You’ll gain practical hands-on experience with leading computing hardware and with evaluating the performance and efficiency of different computing approaches.

You can find the application form here.

-

Project institution:

Project supervisor(s): Dr Tiffany Vlaar (University of Glasgow)

Overview and Background

Technological advances have ushered in the era of big data in ecology.

Usage of deep learning and GPUs shows strong promise for more effective biodiversity monitoring, which is crucial for tracking and mitigating the effects of climate change.

However, many open questions remain about the generalization capabilities of deep neural networks and their behaviour under real‑world constraints.

In addition, the rising use of AI carries an environmental cost.

Training increasingly large models on large datasets demands extensive GPU hours and contributes significantly to carbon emissions.

This project will investigate the interplay between generalization and efficiency when applying deep learning to biodiversity monitoring.

Objectives

Neural networks are typically evaluated based on their ability to generalise to new unseen data. In this project we will investigate which data is most important for achieving good generalisation performance. A potential proxy for data sample “importance” is an example difficulty score. We will consider different metrics for example difficulty and compare these across a common image benchmark dataset and the publicly available ecological dataset Snapshot Serengeti.
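As an illustration of what an example difficulty score can look like, the sketch below computes two simple candidates from per-example training records: the number of times an example flips from correctly to incorrectly classified between consecutive epochs (sometimes called forgetting events), and the mean loss across epochs. The choice of these two metrics is an assumption made here, since the specific metrics are not named above, and the example identifiers and histories are made up.

```python
def forgetting_events(correct_by_epoch):
    """Count transitions from correct to incorrect between consecutive epochs."""
    return sum(
        1
        for prev, cur in zip(correct_by_epoch, correct_by_epoch[1:])
        if prev and not cur
    )


def mean_loss(losses_by_epoch):
    """Average per-example loss across training epochs."""
    return sum(losses_by_epoch) / len(losses_by_epoch)


def rank_by_difficulty(scores):
    """Return example ids ordered from hardest (highest score) to easiest."""
    return sorted(scores, key=scores.get, reverse=True)


# Made-up per-epoch correctness records for three images.
history = {
    "img_001": [True, True, True, True],
    "img_002": [False, True, False, True],
    "img_003": [True, False, True, False],
}
scores = {name: forgetting_events(record) for name, record in history.items()}
ranking = rank_by_difficulty(scores)
```

Different metrics can rank the same examples differently, which is why comparing several of them across datasets is informative.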

The main aim of the project is to investigate how example difficulty, and therefore which data matters most, is affected by model pruning and other approaches to model compression. This project will work towards more efficient and sustainable AI for biodiversity monitoring, by investigating what data matters and how this interacts with model compression techniques.
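For readers new to pruning, the sketch below shows one of the simplest variants, magnitude pruning, applied to a flat list of weights: the weights with the smallest absolute values are set to zero until a target sparsity is reached. Magnitude pruning is used here as an assumed example; the compression methods studied in the project may differ, and the weight values are made up.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes.

    `sparsity` is the fraction of weights to remove, between 0 and 1.
    The input list is left unchanged and a new list is returned.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute weight.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = set(order[:n_prune])
    return [0.0 if i in pruned else w for i, w in enumerate(weights)]


# Made-up weights for a single layer.
weights = [0.5, -0.1, 0.05, -2.0]
pruned_weights = magnitude_prune(weights, sparsity=0.5)
```

Re-evaluating example difficulty scores before and after such a step is one way to see which examples a compressed model handles worse.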

What you’ll do

You’ll be supported by your supervisor while also being encouraged to take initiative and develop independent ideas throughout the project. This will include tasks such as pre‑processing the ecological dataset, designing experiments to evaluate example difficulty, discussing and presenting your results, and critically engaging with relevant research papers.

Why apply

You will be trained in understanding existing machine learning approaches, and how to use these for biodiversity monitoring. Training will be delivered through regular meetings with the supervisor, along with coding, GitHub, and writing support. You will also be able to engage with the supervisor’s collaborators.

You are not expected to have prior knowledge of the subject area.

As part of the project, you will have the option to prepare a short (4-page) workshop paper, which can be submitted to a NeurIPS workshop or a similar conference.

-

Project institution:

Project supervisor(s): Dr Paul R Eizenhöfer & Prof. Kathryn Elmer (University of Glasgow)

Overview and Background

Biodiversity patterns emerge through the interaction of biotic and abiotic processes, yet disentangling the interaction of habitat creation, landscape evolution and climate change over time remains a challenge. This placement supports a PhD project developing a mechanistic modelling framework to quantify how changing environments drive biodiversity, turnover and ecology.

Generating spatially and temporally continuous environmental layers, classified in terms of ecological and landscape variables at regional scale, is a necessary step towards understanding how present-day ecology emerged from the geological past. This placement aims to link ecology and landscape characteristics through an AI-driven supervised classification of seasonal satellite imagery for the Himalaya / Tibetan Plateau system, feeding directly into the model validation of the associated ExaGEO project.

Objectives

This placement will directly support an ExaGEO PhD project that aims to simulate the co-evolution of landscape and biota, and the associated ecological conditions, from the geological past to the present day, based on plausible Earth System scenarios in time and space. However, such modelling efforts require validation against observations, most importantly those of the present day.

Specific objectives:

- Identify key habitats, and consequently the species types and degree of biodiversity, associated with specific geological, geomorphological and climatic conditions across the Himalaya / Tibetan Plateau system at seasonal time scales using satellite remote sensing data. This regional-scale analysis will require GPUs and machine learning approaches to accelerate the classification and correlation of habitat types across multiple decades of satellite imagery.

- Perform a detailed literature review and identify key floral and faunal species from public biological databases (e.g., the GBIF and eflora databases) to support the findings above. This includes constraining key parameters for the species identified (e.g., dispersal and mutation variabilities, environmental requirements, seasonal behaviour). These parameters will directly serve as an input into the modelling framework of the associated ExaGEO PhD project.

- Organise and curate the data and classification schemes into a form that can be integrated into the ExaGEO PhD project workflow, in close collaboration with the PhD student.

Why apply

- Exposure to an active research environment within a bleeding‑edge interdisciplinary project with a strong focus on computation and big data.

- Opportunities to apply your existing skills in statistics and/or computer science to real‑world ecological challenges.

- Experience conducting literature reviews and working with biological databases.

- Collaboration, teamwork, and communication with active researchers across multiple career stages.

You can find the application form here.